Jest 14.0: React Tree Snapshot Testing

One of Jest's philosophies is to provide an integrated “zero-configuration” experience. We want to make it as frictionless as possible to write good, useful tests. We observed that when engineers are provided with ready-to-use tools, they end up writing more tests, which in turn results in more stable and healthier code bases.

One of the big open questions was how to write React tests efficiently. There are plenty of tools such as ReactTestUtils and enzyme. Both of these tools are great and are actively being used. However, engineers frequently told us that they spend more time writing a test than the component itself. As a result, many people stopped writing tests altogether, which eventually led to instabilities. Engineers told us all they wanted was to make sure their components don't change unexpectedly.

Together with the React team we created a new test renderer for React and added snapshot testing to Jest. Consider this example test for a simple Link component:

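    import React from 'react';
    import renderer from 'react-test-renderer';
    // Assumed location of the component under test; adjust to your project.
    import Link from '../Link';

    test('Link renders correctly', () => {
      const tree = renderer
        .create(<Link page="http://www.facebook.com">Facebook</Link>)
        .toJSON();
      expect(tree).toMatchSnapshot();
    });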

The first time this test is run, Jest creates a snapshot file that looks like this:

    exports[`Link renders correctly 1`] = `
    <a
      className="normal"
      href="http://www.facebook.com"
      onMouseEnter={[Function bound _onMouseEnter]}
      onMouseLeave={[Function bound _onMouseLeave]}>
      Facebook
    </a>
    `;

The snapshot artifact should be committed alongside code changes. We use pretty-format to make snapshots human-readable during code review. On subsequent test runs Jest will simply compare the rendered output with the previous snapshot. If they match, the test will pass. If they don't match, either the implementation has changed and the snapshot needs to be updated with jest -u, or the test runner found a bug in your code that should be fixed.
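To make the idea of a human-readable snapshot more concrete, here is a rough sketch of how a rendered tree could be turned into JSX-like text. It assumes the tree has the `{ type, props, children }` shape that the test renderer's `toJSON()` returns. The `printProp` and `printTree` helpers are hypothetical names invented for this illustration; this is not how pretty-format is implemented, only a simplified approximation of the output it produces.

```javascript
// Hypothetical helpers for illustration; not pretty-format's actual API.

// Print one prop: strings are quoted, functions are shown by name only.
function printProp(name, value) {
  if (typeof value === 'string') {
    return `${name}="${value}"`;
  }
  if (typeof value === 'function') {
    return `${name}={[Function ${value.name || 'anonymous'}]}`;
  }
  return `${name}={${JSON.stringify(value)}}`;
}

// Serialize a { type, props, children } tree into indented, JSX-like text.
function printTree(node, indent = '') {
  // Text children are plain strings in the tree.
  if (typeof node === 'string') {
    return indent + node;
  }
  const inner = indent + '  ';
  // Sort prop names so the output does not depend on insertion order.
  const props = Object.keys(node.props || {})
    .sort()
    .map(name => '\n' + inner + printProp(name, node.props[name]))
    .join('');
  const children = node.children || [];
  if (children.length === 0) {
    return `${indent}<${node.type}${props} />`;
  }
  const body = children.map(child => printTree(child, inner)).join('\n');
  return `${indent}<${node.type}${props}>\n${body}\n${indent}</${node.type}>`;
}

// Example tree, shaped like the Link snapshot shown earlier.
function _onMouseEnter() {}
const tree = {
  type: 'a',
  props: {
    className: 'normal',
    href: 'http://www.facebook.com',
    onMouseEnter: _onMouseEnter.bind(null),
  },
  children: ['Facebook'],
};

console.log(printTree(tree));
```

Because functions are printed by name rather than by source, and props are printed in a fixed order, the text stays stable between runs and a diff in code review shows only real changes to the rendered output.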

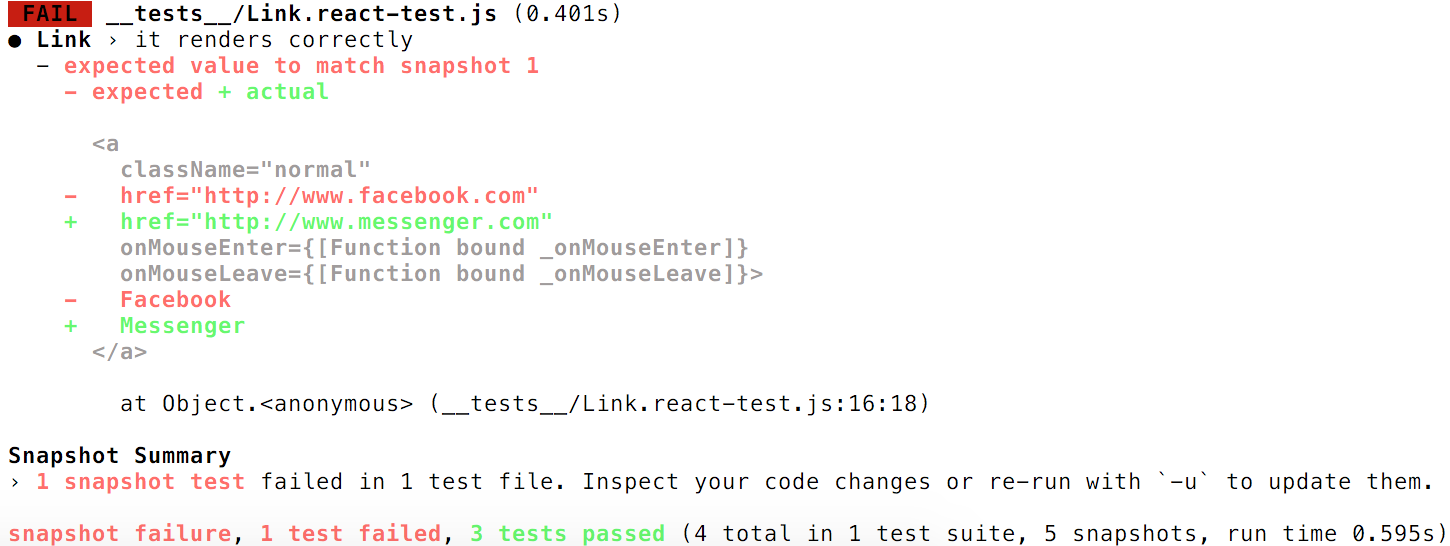

If we change the address the Link component in our example is pointing to, Jest will print this output:

Now you know that you either need to accept the changes with jest -u, or fix the component if the changes were unintentional. To try out this functionality, please clone the snapshot example, modify the Link component and run Jest. We updated the React Tutorial with a new guide for snapshot testing.
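The write, compare, and accept cycle described above can be sketched in a few lines. This is a simplified model under stated assumptions: snapshots live in an in-memory object rather than a file on disk, and `matchSnapshot` with its `update` option is a hypothetical name standing in for what `toMatchSnapshot()` and `jest -u` do; it is not Jest's internal code.

```javascript
// Illustrative sketch only: an in-memory stand-in for a snapshot file.
// `matchSnapshot` and its options are hypothetical names, not Jest's API.
function matchSnapshot(snapshots, key, received, options = {}) {
  const isNew = !Object.prototype.hasOwnProperty.call(snapshots, key);
  if (isNew || options.update) {
    // First run, or the change was accepted (the `jest -u` case):
    // record the received output as the new expectation.
    snapshots[key] = received;
    return { pass: true, written: true };
  }
  // Later runs: pass only if the output is identical to the recording.
  return { pass: snapshots[key] === received, written: false };
}

const snapshots = {};
const key = 'Link renders correctly 1';
const before = '<a href="http://www.facebook.com">Facebook</a>';
const after = '<a href="http://www.instagram.com">Facebook</a>';

const firstRun = matchSnapshot(snapshots, key, before);   // writes the snapshot
const secondRun = matchSnapshot(snapshots, key, before);  // matches the recording
const changedRun = matchSnapshot(snapshots, key, after);  // mismatch: fix or accept
const acceptedRun = matchSnapshot(snapshots, key, after, { update: true });

console.log(firstRun.pass, secondRun.pass, changedRun.pass, acceptedRun.pass);
// prints: true true false true
```

The failing third run is the interesting one: the test cannot tell whether the new address is a bug or an intended change, so the decision is left to the engineer, who either fixes the component or reruns with the update flag.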

This feature was built by Ben Alpert and Cristian Carlesso.

Experimental React Native support

By building a test renderer that targets no specific platform we are finally able to support a full, unmocked version of React Native. We are excited to launch experimental React Native support for Jest through the jest-react-native package.

You can start using Jest with react-native by running yarn add --dev jest-react-native and by adding the preset to your Jest configuration:

    "jest": {
      "preset": "jest-react-native"
    }

- Tutorial and setup guide

- Example project

- Example pull request for snowflake, a popular react-native open source library.

The preset currently only implements the minimal set of configuration necessary to get started with React Native testing. We are hoping for community contributions to improve this project. Please try it and file issues or send pull requests!

Why snapshot testing?

For Facebook's native apps we use a system called “snapshot testing”: it renders UI components, takes a screenshot and then compares the previously recorded screenshot with the one produced after an engineer's changes. If the screenshots don't match, there are two possibilities: either the change is unexpected, or the screenshot can be updated to the new version of the UI component.

While this was the solution we wanted for the web, we also found many problems with such end-to-end tests that snapshot integration tests solve:

- No flakiness: Because tests are run in a command line runner instead of a real browser or on a real phone, the test runner doesn't have to wait for builds, spawn browsers, load a page and drive the UI to get a component into the expected state. Those steps tend to be flaky and make test results noisy.

- Fast iteration speed: Engineers want to get results in less than a second rather than waiting for minutes or even hours. If tests don't run quickly, as is the case in most end-to-end frameworks, engineers don't run them at all or don't bother writing them in the first place.

- Debugging: It's easy to step into the code of an integration test in JS instead of trying to recreate the screenshot test scenario and debugging what happened in the visual diff.

Because we believe snapshot testing can be useful beyond Jest, we split the feature into a jest-snapshot package. We are happy to work with the community to make it more generic so that it can be integrated with other test runners and so that we can share concepts and infrastructure with each other.

Finally, here is a quote from a Facebook engineer describing how snapshot testing changed his unit testing experience:

“One of the most challenging aspects of my project was keeping the input and output files for each test case in sync. Each little change in functionality could cause all the output files to change. With snapshot testing I do not need the output files, the snapshots are generated for free by Jest! I can simply inspect how Jest updates the snapshots rather than making the changes manually.” – Kyle Davis working on fjs.

What's next for Jest

Recently Aaron Abramov has joined the Jest team full time to improve our unit and integration test infrastructure for Facebook's ads products. For the next few months the Jest team is planning major improvements in these areas:

- Replace Jasmine: Jasmine is slowing us down and is not being very actively developed. We started replacing all the Jasmine matchers and are excited to add new features and drop this dependency.

- Code Coverage: When Jest was originally created, tools such as Babel didn't exist. Our code coverage support has a bunch of edge cases and doesn't always work properly. We are rewriting it to address all the current problems with coverage.

- Developer Experience: This ranges from improving the setup process and the debugging experience to CLI improvements and more documentation.

- Mocking: The mocking system, especially around manual mocks, is not working well and is confusing. We hope to make it more strict and easier to understand.

- Performance: Further performance improvements especially for really large codebases are being worked on.

As always, if you have questions or if you are excited to help out, please reach out to us!